
I am a fourth-year Computer Science Ph.D. student at Princeton University, working with Prof. Ravi Netravali. I am affiliated with the Princeton Systems for AI Lab (SAIL). I obtained my M.S.E. and B.S.E. in Computer Science at the University of Michigan, where I worked with Prof. Mosharaf Chowdhury and Prof. Harsha V. Madhyastha on projects related to networked systems, and a B.S.E. in Electrical and Computer Engineering from Shanghai Jiao Tong University.

My research interests are at the intersection of networked systems and machine learning. Recently, my work has focused on improving the efficiency and scalability of systems that serve large language models and their applications, from monolithic to compound agentic systems, and from digital to physical AI. I am currently working on serving systems and application-level optimization for emerging physical AI. If you are interested, please feel free to reach out and chat about potential future collaboration!

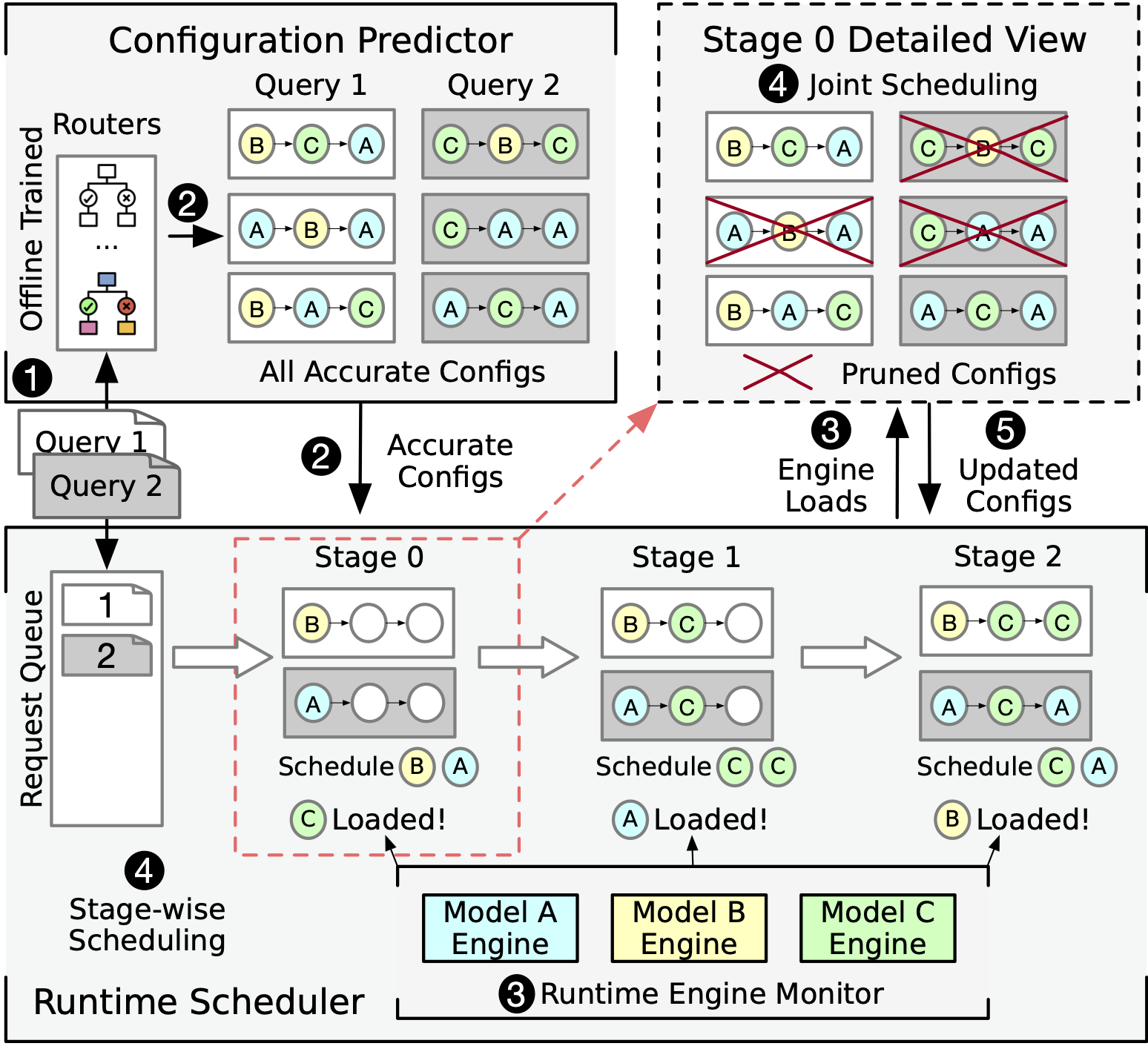

Yinwei Dai, Zhuofu Chen, Anand Iyer, Ravi Netravali

arXiv, 2025

We present Aragog, a system that progressively adapts request configurations throughout execution for scalable serving of agentic workflows.

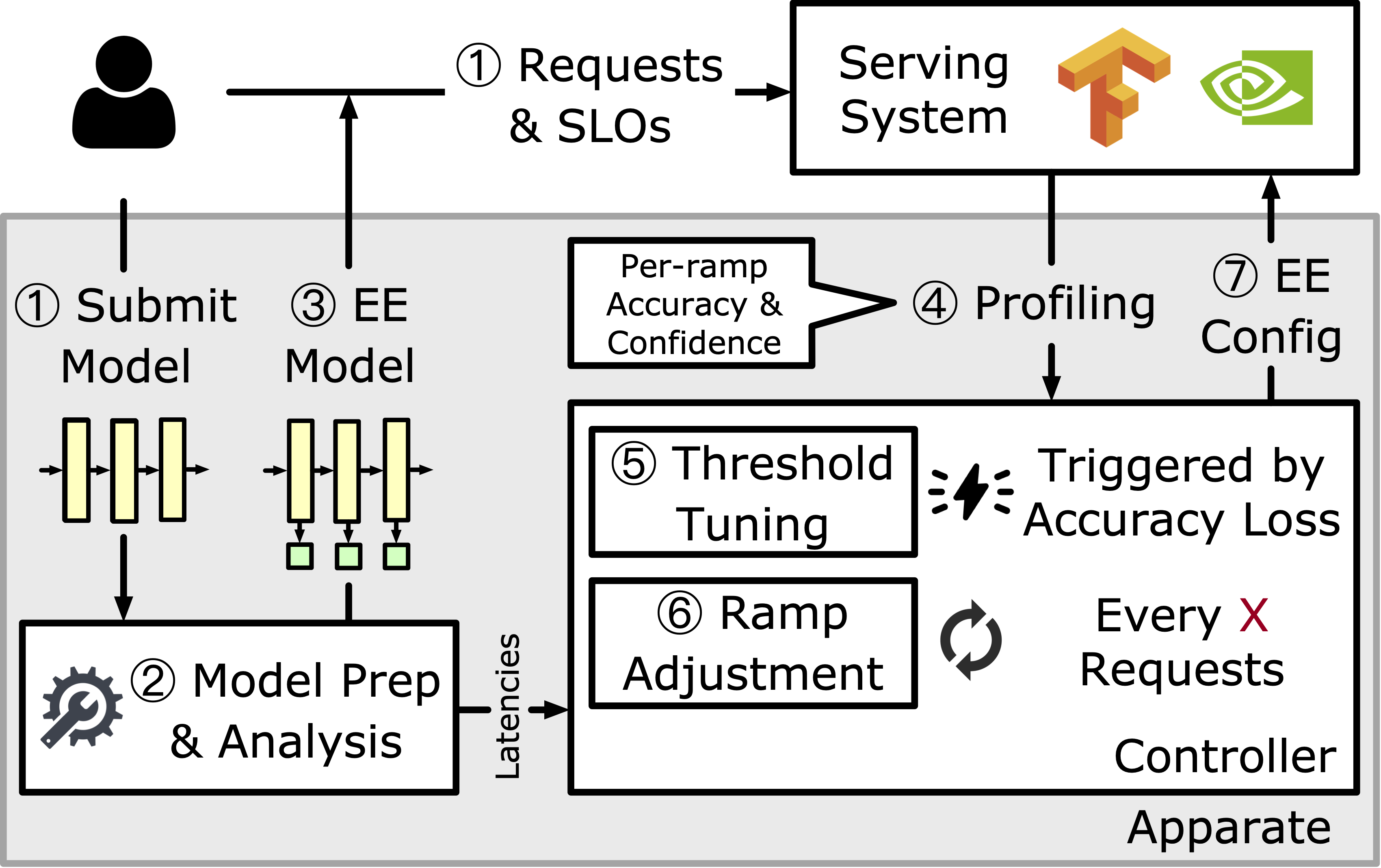

Yinwei Dai*, Rui Pan*, Anand Iyer, Kai Li, Ravi Netravali

SOSP, 2024

Acceptance Rate: 17.34% / GitHub / Paper / Slides

We present Apparate, the first system that automatically injects and manages early exits for serving a wide range of models.

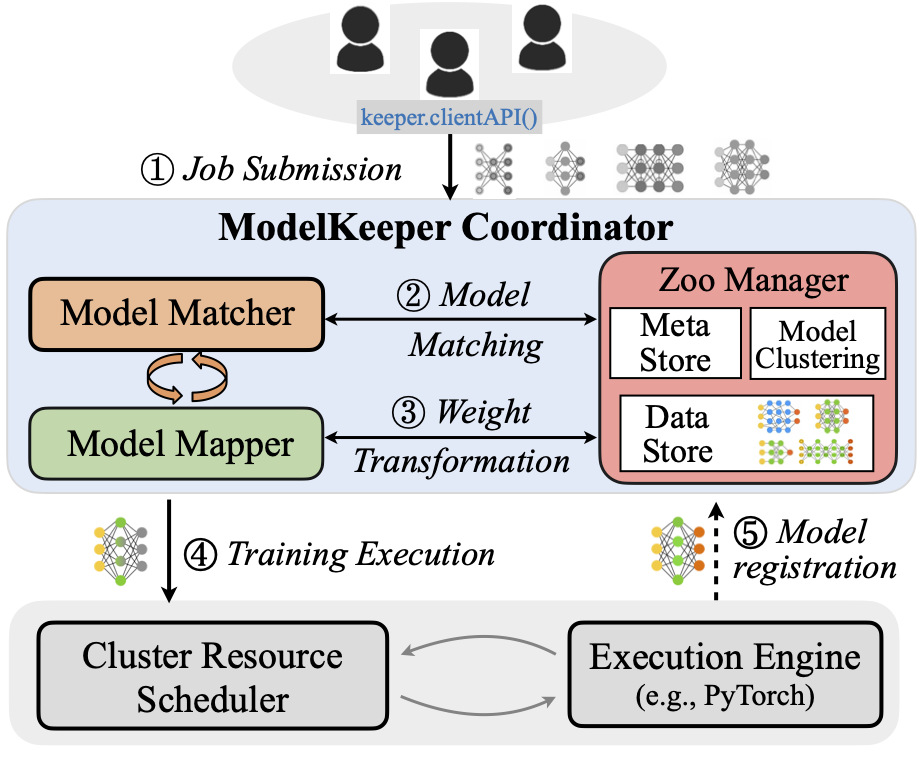

Fan Lai, Yinwei Dai, Harsha Madhyastha, Mosharaf Chowdhury

NSDI, 2023

Acceptance Rate: 18.38% / GitHub / Paper / Talk

We introduce ModelKeeper, a cluster-scale model service framework that accelerates DNN training by reducing the computation needed to reach the same model performance through automated model transformation.

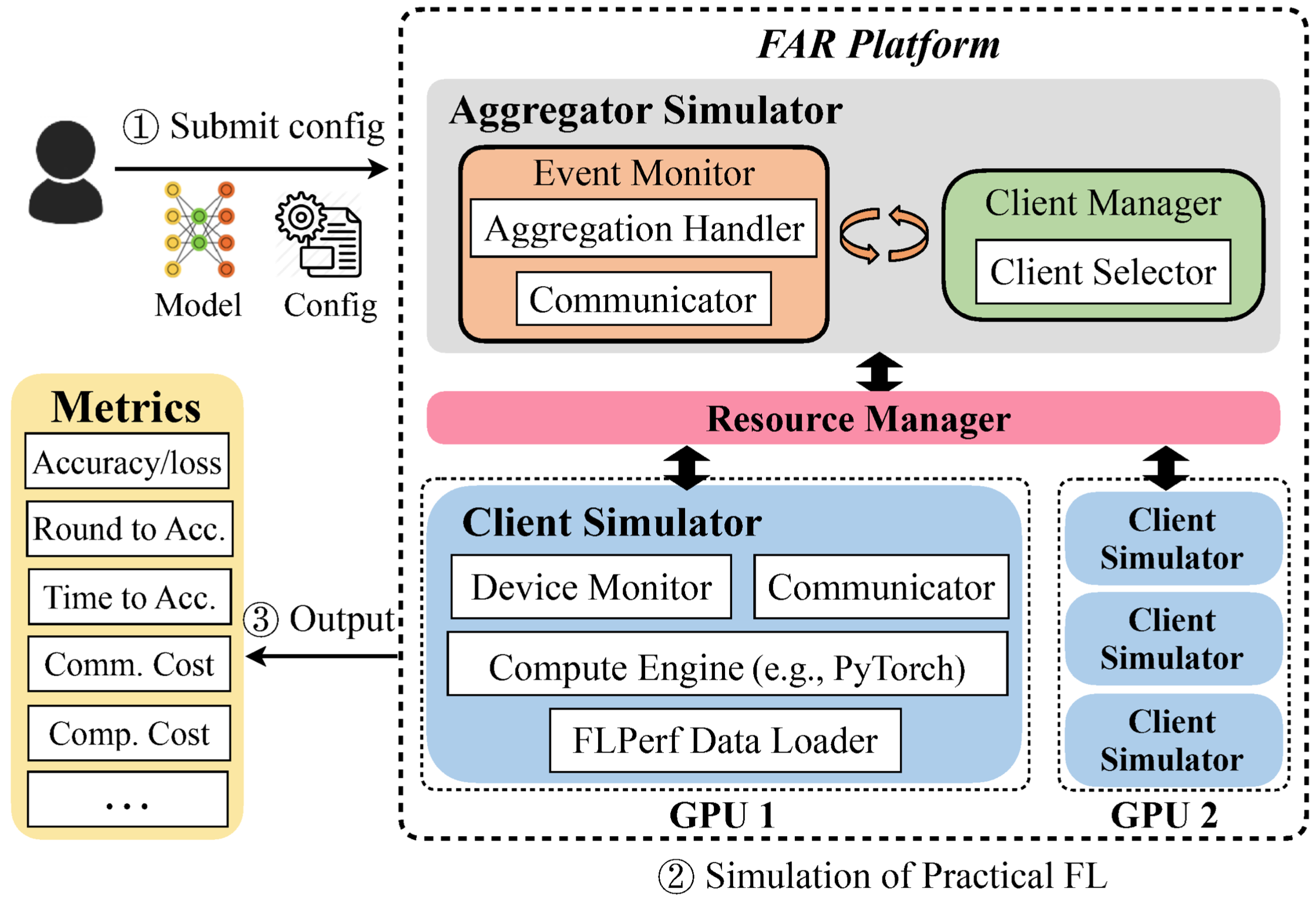

Fan Lai, Yinwei Dai, Sanjay Singapuram, Jiachen Liu, Xiangfeng Zhu, Harsha Madhyastha, Mosharaf Chowdhury

ICML, 2022

Acceptance Rate: 21.94% / Website / GitHub

Deployed at LinkedIn

Best Paper Award at SOSP ResilientFL 2021

We present FedScale, a diverse set of challenging and realistic benchmark datasets to facilitate scalable, comprehensive, and reproducible federated learning (FL) research.

Meta, 2026/05 - 2026/08

Research Intern, AI and Systems Co-Design Team.

Microsoft Research, 2025/05 - 2025/08

Research Intern, Intelligent Networked Systems Group.

COS 316: Principles of Computer System Design, Fall 2023

COS 418: Distributed Systems, Winter 2024

EECS 442: Computer Vision, Winter 2022

EECS 489: Computer Networks, Fall 2021

Conference Reviewer: NeurIPS (Main and D&B Tracks) 2022-2025; MLSys 2026

Journal Reviewer: Transactions on Mobile Computing 2022, 2025

Artifact Evaluation Committee: SIGCOMM 2022, MLSys 2023

My name in Chinese:

If you want to chat with me, please send me an email!